Can you tell the difference between a real video and a deepfake?

As artificial intelligence continues to advance, deepfakes have emerged as a significant threat to the authenticity of online content. These AI-generated videos can convincingly mimic real people, making it increasingly difficult to distinguish fact from fiction.

In fact, a 2022 survey found that only 57% of global consumers claimed they could detect a deepfake video. As the technology behind deepfakes advances, so too do the tools and techniques designed to detect them.

In this article, we will explore the 8 best deepfake detection tools and techniques available today, which utilize advanced AI algorithms to analyze and detect deepfakes with impressive accuracy. Stay tuned to learn how you can protect yourself and others from the growing threat of deepfakes in the digital age.

Overview of Deepfake Detection

Deepfake detection is becoming increasingly important as AI and machine learning technology advances, allowing ever more realistic deepfake videos to be created. Deepfake detection tools and techniques aim to detect videos, audio, and images that have been manipulated or synthetically generated.

Techniques for detecting deepfakes include analyzing facial movements, voice, and other features to determine whether a video is genuine. Other methods use machine learning algorithms to recognize patterns in deepfake videos and distinguish them from real ones. Because deepfakes can be harmful, for example by influencing public opinion or manipulating individuals, the development of reliable deepfake detection tools and techniques is becoming more important across industries.

Challenges in Deepfake Detection

The rise of deepfakes (artificial audio, images, and videos used to manipulate and misinform) is a growing concern in many industries, including politics, entertainment, and finance. Detecting deepfakes presents a considerable challenge because hackers are becoming more sophisticated in their ability to create untraceable, high-quality forgeries.

Traditional techniques like image analysis and metadata evaluation are no longer reliable. Major challenges in deepfake detection include forgeries realistic enough that their artifacts are hard to identify, the large datasets and the time and computing resources needed to train detection algorithms, and the difficulty of differentiating real from fake audio and video in uncontrolled environments.

Deepfake detection techniques need to operate quickly, detect subtle changes, and integrate easily with existing infrastructure to be effective. Finding solutions to these challenges will be crucial in the fight against deepfakes.

Types of Deepfakes

Deepfakes come in several types, each with its own level of sophistication and complexity. One type replaces an existing face in a video with another face, while another creates a completely new, synthetic face. There are also deepfakes that manipulate audio to create a fake voice, or that alter the context of a video to create a false narrative. In addition, deepfakes can be static images, such as altered photos or realistic computer-generated faces.

List of Best Deepfake Detection Tools and Techniques

Each of the tools and techniques below, from Intel's Real-Time Deepfake Detector, a pioneering solution that leverages subtle “blood flow” changes in video pixels, to the innovative Deepfake Detection Using Phoneme-Viseme Mismatches technique, represents a unique front in the battle against deepfakes.

The review also explores the extensive capabilities of Microsoft's Video Authenticator, Sentinel, Deepware Scanner, WeVerify Deepfake Detection, Sensity, and Reality Defender. Each tool offers a unique approach to deepfake detection, providing a comprehensive defence against this escalating threat.

Stay with us as we examine each tool closely, providing a thorough understanding of its functionalities and role in combating deepfakes.

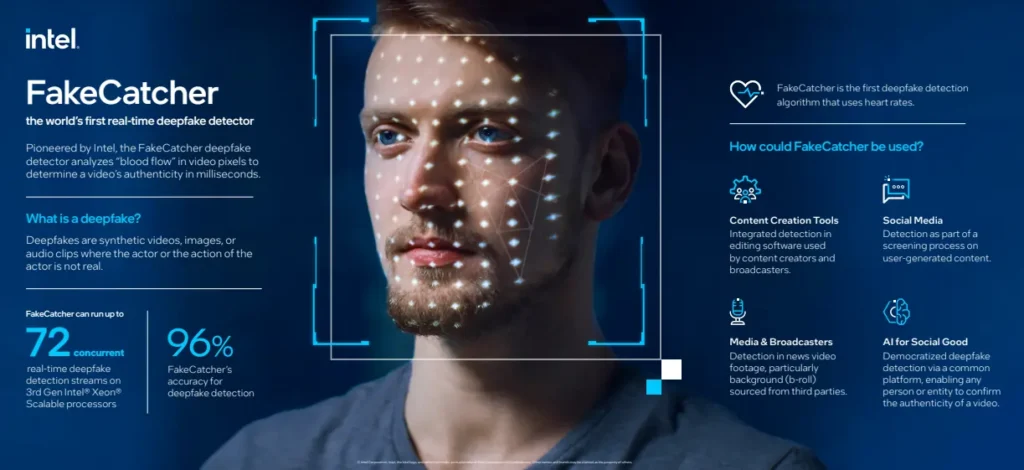

1. Intel’s Real-Time Deepfake Detector

Intel's Real-Time Deepfake Detector, known as FakeCatcher, emerges as a groundbreaking solution. This innovative technology, developed in collaboration with the State University of New York at Binghamton, is capable of detecting fake videos with an impressive 96% accuracy rate, with real-time results. By using Intel's advanced hardware and software, FakeCatcher is a powerful tool that can restore trust in digital media by distinguishing between real and manipulated content.

FakeCatcher operates by identifying authentic clues in real videos, such as the subtle “blood flow” changes in the pixels of a video. When our hearts pump blood, our veins change colour, and these blood flow signals are collected from all over the face. Algorithms then translate these signals into spatiotemporal maps, and with the help of deep learning models, FakeCatcher can instantly determine whether a video is real or fake.
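To make the idea of a spatiotemporal map more concrete, here is a minimal Python sketch. It is not Intel's FakeCatcher code: it assumes frames are already cropped to the face and stored as rows of (R, G, B) tuples, averages the green channel (a common choice in remote photoplethysmography) over a hypothetical grid of face regions, and stacks the per-region signals into a regions-by-frames map. FakeCatcher's actual signal extraction and its deep learning classifier are not reproduced here.

```python
from statistics import mean, pstdev

# A frame is a face crop: rows of (R, G, B) pixel tuples (assumed input format).
Frame = list[list[tuple[int, int, int]]]


def region_means(frame: Frame, grid: int) -> list[float]:
    """Split the crop into grid x grid regions and return the mean green value of each.

    Assumes the crop is at least `grid` pixels high and wide.
    """
    h, w = len(frame), len(frame[0])
    values = []
    for gy in range(grid):
        for gx in range(grid):
            ys = range(gy * h // grid, (gy + 1) * h // grid)
            xs = range(gx * w // grid, (gx + 1) * w // grid)
            values.append(mean(frame[y][x][1] for y in ys for x in xs))
    return values


def spatiotemporal_map(frames: list[Frame], grid: int = 2) -> list[list[float]]:
    """Return a map with one row per face region and one column per frame.

    Each row is z-score normalised so that regions with different skin tones
    can be compared on the same scale.
    """
    per_frame = [region_means(f, grid) for f in frames]  # frames x regions
    rows = [list(signal) for signal in zip(*per_frame)]  # regions x frames
    st_map = []
    for row in rows:
        mu, sd = mean(row), pstdev(row)
        st_map.append([(v - mu) / sd if sd > 0 else 0.0 for v in row])
    return st_map


if __name__ == "__main__":
    import math

    # Synthetic 4x4 "face" whose green channel pulses once every 8 frames.
    frames = [
        [[(100, int(128 + 10 * math.sin(2 * math.pi * t / 8)), 90)] * 4 for _ in range(4)]
        for t in range(8)
    ]
    st_map = spatiotemporal_map(frames)
    print(len(st_map), "regions x", len(st_map[0]), "frames")  # 4 regions x 8 frames
```

In a real pipeline, a map like this would be passed to a trained classifier; the grid size and the per-row normalisation here are illustrative choices only.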

Key Features of Intel's Real-Time Deepfake Detector

- Can detect fake videos with a 96% accuracy rate

- Returns results in milliseconds

- Uses subtle “blood flow” in the pixels of a video to detect deepfakes

- Runs on Intel hardware and software, interfacing through a web-based platform

2. Microsoft Video Authenticator

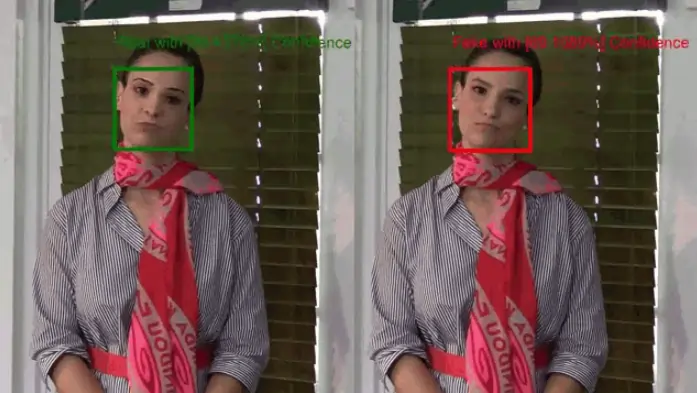

Microsoft's Video Authenticator is an advanced deepfake detection tool developed by the tech giant's Research and Responsible AI teams. It is designed to analyze still photos or videos and provide a real-time confidence score indicating the likelihood that the media has been artificially manipulated. The tool has been tested on leading public datasets used for training and testing deepfake detection technologies.

It's a powerful weapon in the fight against disinformation, capable of detecting the blending boundary of deepfakes and subtle grayscale changes that are often undetectable to the human eye.

Through strategic partnerships with organizations like the AI Foundation and media companies such as the BBC and the New York Times, Microsoft is ensuring that this technology is widely adopted and used responsibly.

Key Features of Microsoft Video Authenticator

- Provides a real-time confidence score

- Detects subtle grayscale changes

- Allows for immediate detection of deepfakes

- Partnerships with AI Foundation, media companies, and more for responsible use and wide adoption

3. Sentinel

Sentinel is a deepfake detection technology designed for democratic governments, defence agencies, and enterprises, offering an AI-based protection platform that combats the threat of deepfakes. Used by leading organizations across Europe, Sentinel's technology provides an automated way to detect AI-generated forgeries in digital media, helping to ensure the integrity of your information.

Sentinel's deepfake detection technology is not just a tool, but a shield. It allows users to upload digital media, which is then scrutinized for any signs of AI manipulation.

If a deepfake is detected, Sentinel provides a detailed visualization of the manipulation, allowing users to see exactly where and how the media has been altered. With Sentinel, you're not just detecting deepfakes, you're defending the truth.

Key Features of Sentinel

- Automated analysis of uploaded digital media

- Detailed visualization of detected manipulations

- Largest database of verified deepfakes

- Multi-layer defence for high accuracy

- AI-generated audio classification

- Ensemble of neural network classifiers
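Sentinel does not publish how its classifiers are combined, so the following is only a generic sketch of what an ensemble of classifiers means: each (hypothetical) model returns a probability that the media is fake, the scores are averaged, optionally with weights, and the result is compared against a threshold. The stand-in classifier functions, the weights, and the 0.5 threshold are placeholders, not Sentinel's settings.

```python
from typing import Callable, Optional, Sequence

# Hypothetical classifier type: takes raw media bytes, returns P(fake) in [0, 1].
Classifier = Callable[[bytes], float]


def ensemble_score(
    media: bytes,
    classifiers: Sequence[Classifier],
    weights: Optional[Sequence[float]] = None,
) -> float:
    """Weighted average of every classifier's fake probability."""
    if weights is None:
        weights = [1.0] * len(classifiers)
    if not classifiers or len(weights) != len(classifiers):
        raise ValueError("need at least one classifier and exactly one weight per classifier")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(w * clf(media) for w, clf in zip(weights, classifiers)) / total_weight


def is_deepfake(
    media: bytes,
    classifiers: Sequence[Classifier],
    threshold: float = 0.5,  # placeholder decision threshold
    weights: Optional[Sequence[float]] = None,
) -> bool:
    """Flag the media as fake when the ensemble score reaches the threshold."""
    return ensemble_score(media, classifiers, weights) >= threshold


if __name__ == "__main__":
    # Stand-ins for real models (for example face, audio and artefact detectors).
    models = [lambda media: 0.9, lambda media: 0.4, lambda media: 0.8]
    print(round(ensemble_score(b"...", models), 2), is_deepfake(b"...", models))  # 0.7 True
```

Averaging is only one way to combine models; production systems may instead learn the combination (stacking) or use voting.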

4. Deepware Scanner

Deepware Scanner is an open-source forensic tool from a team that has been at the forefront of deepfake research since 2018, developing powerful methods to detect deepfakes. The tool stands out for having been rigorously tested on multiple data sources, including organic and live videos.

Deepware Scanner is built on EfficientNet-B7, a convolutional neural network architecture. EfficientNet is known for scaling all of a CNN's dimensions (depth, width, and input resolution) uniformly, which yields higher accuracy at lower computational cost. The primary training dataset is the DFDC (Deepfake Detection Challenge) dataset, which contains 120,000 consented videos. Test datasets include 4chan Real, MrDeepFakes, Celeb-DF YouTube, and others, making Deepware Scanner a comprehensive tool for deepfake detection.
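The uniform scaling mentioned above refers to EfficientNet's compound scaling rule, in which a single coefficient φ grows depth, width, and input resolution together. The sketch below uses the coefficients reported in the original EfficientNet paper for the B0 baseline (α = 1.2, β = 1.1, γ = 1.15, 224-pixel input). The published B1 to B7 configurations only approximately follow this rule, so treat the printed numbers as illustrative rather than as Deepware's exact model settings.

```python
# Compound scaling rule from the EfficientNet paper (Tan & Le, 2019):
#   depth multiplier      d = ALPHA ** phi
#   width multiplier      w = BETA ** phi
#   resolution multiplier r = GAMMA ** phi
# with ALPHA * BETA**2 * GAMMA**2 ≈ 2, so FLOPs grow by roughly 2 ** phi.
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # coefficients reported for the B0 baseline
BASE_RESOLUTION = 224  # EfficientNet-B0 input size in pixels


def compound_scale(phi: float) -> dict[str, float]:
    """Return the depth/width/resolution multipliers and approximate FLOPs factor for phi."""
    return {
        "depth": ALPHA ** phi,
        "width": BETA ** phi,
        "resolution": BASE_RESOLUTION * GAMMA ** phi,
        "flops": (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi,
    }


if __name__ == "__main__":
    for phi in range(8):
        s = compound_scale(phi)
        print(
            f"phi={phi}: depth x{s['depth']:.2f}, width x{s['width']:.2f}, "
            f"resolution ~{s['resolution']:.0f}px, FLOPs x{s['flops']:.1f}"
        )
```

Width and resolution enter the constraint squared because a convolution's cost grows with the square of both the channel count and the image side length.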

Key Features of Deepware Scanner

- Open-source Deepfake detection tool

- Based on the EfficientNet-B7 model

- Uses the DFDC dataset with 120,000 consented videos

- Tested on multiple datasets like MrDeepFakes, Celeb-DF YouTube, and 4chan Real

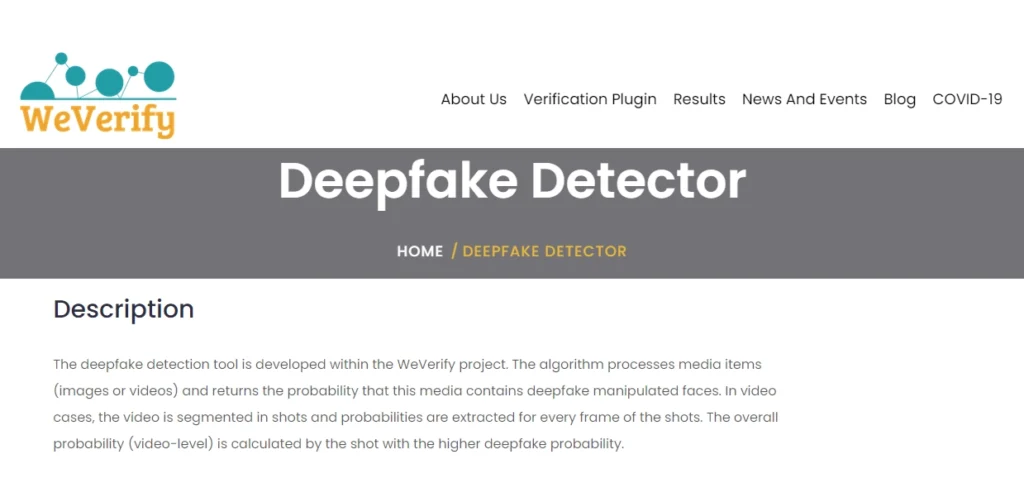

5. WeVerify Deepfake Detection

The WeVerify Deepfake Detection Tool is a robust solution against deepfake technology. Developed within the WeVerify project, it uses advanced algorithms to analyze media items and estimate the probability that they have been manipulated. For both images and videos, WeVerify provides a comprehensive analysis; videos are segmented into shots, and a deepfake probability is extracted for every frame.

The overall deepfake probability is calculated based on the shot with the highest deepfake probability, ensuring a thorough and accurate assessment.
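The aggregation rule described above can be sketched in a few lines of Python. WeVerify's description says only that the overall score comes from the shot with the highest deepfake probability; how frame scores are combined into a shot score is an assumption here (a simple mean), and the frame probabilities in the example are made up.

```python
from statistics import mean
from typing import Sequence, Tuple


def shot_probability(frame_probs: Sequence[float]) -> float:
    # ASSUMPTION: a shot's score is the mean of its per-frame probabilities.
    return mean(frame_probs)


def video_probability(shots: Sequence[Sequence[float]]) -> Tuple[float, int]:
    """Return (overall deepfake probability, index of the most suspicious shot).

    The overall score is the score of the shot with the highest deepfake probability.
    """
    if not shots:
        raise ValueError("the video contains no shots")
    scores = [shot_probability(frames) for frames in shots]
    worst = max(range(len(scores)), key=scores.__getitem__)
    return scores[worst], worst


if __name__ == "__main__":
    # Made-up per-frame probabilities for a three-shot video.
    shots = [[0.10, 0.12, 0.08], [0.85, 0.90, 0.80], [0.20, 0.25]]
    overall, shot_index = video_probability(shots)
    print(f"overall deepfake probability {overall:.2f} (shot {shot_index})")
    # prints: overall deepfake probability 0.85 (shot 1)
```

Taking the maximum over shots means that a single manipulated segment is enough to flag the whole video, even if every other shot looks authentic.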

Available as a standalone demo and a REST API, WeVerify can be seamlessly integrated into various platforms. The project's primary aim is to develop intelligent human-in-the-loop content verification and disinformation analysis methods and tools. By analyzing and contextualizing social media and web content, WeVerify exposes fabricated content, contributing to a safer and more trustworthy online ecosystem.

Key Features of WeVerify

- Deepfake detection for input images and videos

- Comprehensive analysis with frame-by-frame probability extraction

- Intelligent human-in-the-loop content verification

- Disinformation analysis methods and tools

- A blockchain-based public database of known fakes

6. Sensity

Sensity, a leading provider in combating the rising concern of deepfake technology, offers an impressive solution. Its deepfake detection API, developed in-house, is built to analyze image and video files and identify the latest AI-driven techniques for media manipulation and synthesis. From fabricated human faces in social media profiles to convincing face swaps in videos, Sensity's system is designed to expose these deceptive practices.

Sensity's detectors have been meticulously trained on millions of artificially generated images sourced from various online platforms. This extensive training equips them with the expertise to identify the distinct artefacts and high-frequency signals commonly associated with deepfake images.
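As a rough illustration of what a high-frequency signal is, the sketch below measures the average absolute response of a 4-neighbour Laplacian filter on a grayscale image, a simple hand-made high-pass filter. This is not Sensity's method (its detectors are trained neural networks); it only shows that smooth regions produce little high-frequency energy while sharp, repetitive pixel patterns produce a lot.

```python
def laplacian_energy(img: list[list[float]]) -> float:
    """Mean absolute 4-neighbour Laplacian over the interior pixels of a grayscale image.

    High values indicate strong high-frequency content (sharp, rapid pixel changes).
    """
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3 pixels")
    total = 0.0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1] - 4 * img[y][x]
            total += abs(lap)
    return total / ((h - 2) * (w - 2))


if __name__ == "__main__":
    size = 8
    # Smooth brightness ramp: the Laplacian of a linear ramp is zero everywhere.
    ramp = [[float(x + y) for x in range(size)] for y in range(size)]
    # Pixel-level checkerboard: an extreme high-frequency pattern.
    checker = [[255.0 * ((x + y) % 2) for x in range(size)] for y in range(size)]
    print(laplacian_energy(ramp), laplacian_energy(checker))  # 0.0 1020.0
```

A learned detector effectively discovers many such filters on its own and learns which combinations of them are typical of generated images.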

With high accuracy, Sensity's detection capabilities extend to images from popular AI models like DALL-E, Stable Diffusion, and Midjourney. Consequently, Sensity is a reliable choice for both businesses and individuals seeking to safeguard their digital media against the perils of deepfakes.

Key Features of Sensity

- Deepfake Detection: Analyze image and video files for AI-based media manipulation

- GAN: Spot synthetic identities, such as GAN-generated faces with realistic expressions and poses, used as fake personas and bot accounts

- Detecting AI-Generated Images: Detect AI-generated images with 95.8% accuracy

- Face Swap: Detect deepfakes used for identity theft and KYC process spoofing

7. Reality Defender

Reality Defender, a detection platform created by some of the most proficient teams in machine learning and computer vision research, uses deep learning algorithms and offers a robust shield against the potential harm of deepfakes and generative content.

As an independent observer, I can attest that Reality Defender is not just a tool for enterprises, platforms, or government entities. It's a security system that provides real-time detection of deepfakes, a crucial feature in our rapidly changing digital world.

The platform's advanced toolset, capable of indexing billions of assets, is designed to combat even the most sophisticated threats. The turn-key defence system is impressive: it can be integrated into your existing setup via an encrypted API, or you can scan files in its deepfake detection web app.

Moreover, the platform's real-time risk scoring, email alerts, and forensic review reports ensure that users are always informed and prepared.

Key Features of Reality Defender

- Best-in-class deepfake detection

- Real-time scanning of images, videos, and audio

- Comprehensive web app for deepfake detection

- Government-grade detection platform

- Real-time risk scoring, email alerts, and forensic review reports

- Encrypted API for turn-key defence

- Indexes billions of assets to protect against advanced threats

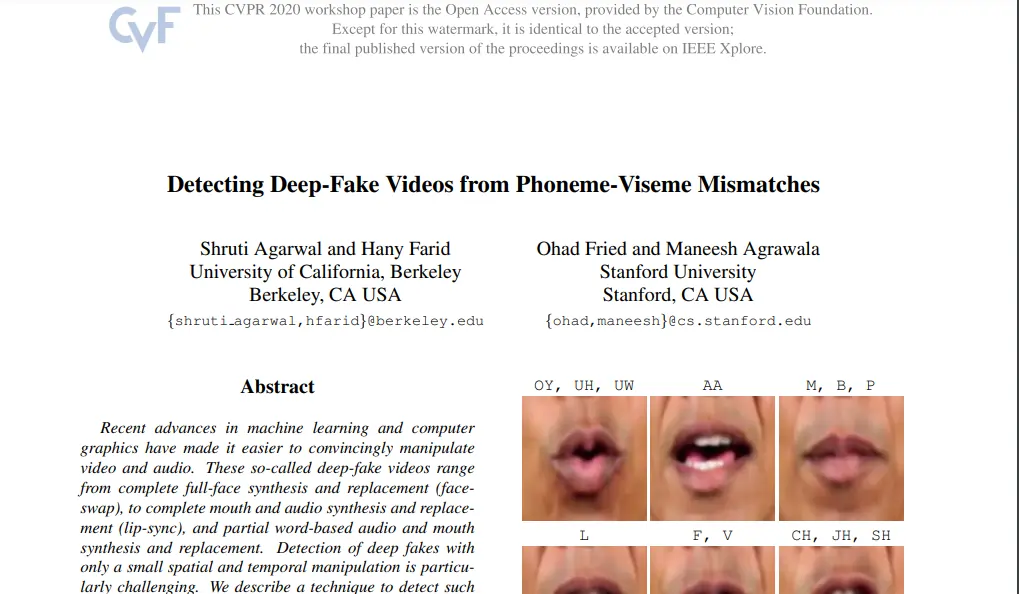

8. Deepfake Detection Using Phoneme-Viseme Mismatches

Deepfake Detection Using Phoneme-Viseme Mismatches is a scientific technique that offers a groundbreaking solution to the growing problem of deepfake videos. Developed by researchers at Stanford University and the University of California, Berkeley, this model is a game-changer for organizations and individuals concerned with the integrity of digital media.

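This model detects manipulated facial features by exploiting inconsistencies between visemes (the dynamics of mouth shape) and spoken phonemes. For example, the sounds M, B, and P require the lips to close completely, so a video in which the mouth stays open while these sounds are spoken is suspect. It is a powerful technique for detecting even the most subtle and localized manipulations in deepfake videos.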
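A minimal sketch of the phoneme-viseme check, under stated assumptions: phoneme timings are already aligned to video frames, and a per-frame mouth-openness value between 0 (lips closed) and 1 (wide open) is already available. Both inputs, the `PhonemeSpan` type, and the 0.1 threshold are hypothetical; the published method used its own lip-closure measurements. The code flags M, B, or P sounds during which the mouth never closes.

```python
from dataclasses import dataclass
from typing import List, Sequence

# Bilabial sounds (as in "mama", "baba", "papa"): the lips must close fully.
CLOSED_MOUTH_PHONEMES = {"M", "B", "P"}


@dataclass
class PhonemeSpan:
    """Hypothetical input: a phoneme aligned to video frames [start, end)."""
    phoneme: str
    start: int
    end: int


def mismatches(spans: Sequence[PhonemeSpan], openness: Sequence[float],
               threshold: float = 0.1) -> List[PhonemeSpan]:
    """Return M/B/P spans during which the mouth never closes.

    `openness` holds one value per frame; a span is flagged when even its
    most-closed frame stays above `threshold`.
    """
    flagged = []
    for span in spans:
        if span.phoneme.upper() not in CLOSED_MOUTH_PHONEMES:
            continue
        window = openness[span.start:span.end]
        if window and min(window) > threshold:
            flagged.append(span)
    return flagged


def mismatch_rate(spans: Sequence[PhonemeSpan], openness: Sequence[float],
                  threshold: float = 0.1) -> float:
    """Fraction of M/B/P spans that are mismatched (0.0 if there are none)."""
    relevant = [s for s in spans if s.phoneme.upper() in CLOSED_MOUTH_PHONEMES]
    if not relevant:
        return 0.0
    return len(mismatches(relevant, openness, threshold)) / len(relevant)


if __name__ == "__main__":
    openness = [0.4, 0.3, 0.02, 0.01, 0.3, 0.35, 0.3, 0.4]
    spans = [PhonemeSpan("AH", 0, 2), PhonemeSpan("M", 2, 4), PhonemeSpan("B", 5, 7)]
    # "M" closes the lips (0.01); "B" never does (minimum 0.3), so it is flagged.
    print([s.phoneme for s in mismatches(spans, openness)], mismatch_rate(spans, openness))
```

A single mismatch can be noise, so a rate over many M, B, and P occurrences is a more robust signal than any one flagged span.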

With impressive accuracy rates for both manual and automatic video authentication, this deepfake detection technique can be your reliable ally in the fight against deepfake manipulation.

Key Benefits of this Technique

- Capable of detecting spatially small and temporally localized manipulations

- Used for both manual and automatic video authentication

- Showed an accuracy of 96.0%, 97.8%, and 97.4% for manual authentication

- Showed an accuracy of 93.4%, 97.0%, and 92.8% for automatic authentication

Ethical Considerations and Implications of Deepfake Detection

As the use of deepfakes becomes more widespread, it is important to consider the ethical implications of detecting them. While deepfake detection tools may help prevent the spread of misleading or harmful content, there is a risk that they may be used for unethical purposes such as surveillance or censorship.

Additionally, the use of these tools raises questions about privacy and consent, as individuals may not be aware that their images or videos are being used in this way. As such, it is important to approach deepfake detection with caution and with attention to the potential consequences of both detecting and failing to detect this deceptive content.

Final Note

As deepfake technology continues to evolve, it's crucial for individuals, organizations, and governments to stay informed and proactive in addressing the ethical implications and potential misuse of this powerful tool.

In the face of this growing threat, the development of deepfake detection tools and techniques is more important than ever.

As we strive to maintain trust in our digital world, we must also ask ourselves: How can we ensure that the benefits of deepfake technology are harnessed for good, while minimizing the risks? What role do policymakers, tech companies, and individuals play in addressing the challenges posed by deepfakes? And ultimately, can we create a future where deepfake technology is used ethically and responsibly, without compromising the integrity of our shared reality?